I’m rebooting a startup that I set aside a year ago. Some recent research and development advances make it seem worth pursuing again. Specifically, the improved approach uses a deep learning decision-making filter of sorts to select among natural language generators based on characteristics of the interlocutor’s queries. The channeling to the best generator uses word and phrase cues, while the generators themselves are built on a novel deep learning framework that integrates ontologies about specific domain areas or motives of the chatbot. Some of the response systems involve more training than others: they are deeper and have subtle goals in responding to the query. Others are less nuanced and just engage in non-performative casual speech.
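To make the channeling idea concrete, here is a minimal sketch of routing a query to one of several generators. It is an illustration under loud assumptions, not the actual system: plain substring cue counting stands in for the deep learning filter, canned replies stand in for the trained generators, and every name in it (`Generator`, `score`, `route`, the cue lists) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Generator:
    name: str
    cues: List[str]                 # hypothetical cue words and phrases
    respond: Callable[[str], str]   # stand-in for a trained generator


def score(query: str, cues: List[str]) -> int:
    """Count how many cue words or phrases occur in the lowercased query."""
    text = query.lower()
    return sum(1 for cue in cues if cue in text)


def route(query: str, generators: List[Generator], fallback: Generator) -> Generator:
    """Pick the generator with the most cue hits; use the fallback on zero hits."""
    best = max(generators, key=lambda g: score(query, g.cues))
    return best if score(query, best.cues) > 0 else fallback


# Assumed example generators: two "deeper" domain ones and a casual fallback.
CASUAL = Generator("casual", [], lambda q: "Nice to chat!")
GENERATORS = [
    Generator("medical", ["symptom", "dosage"], lambda q: "Let me look into that."),
    Generator("sales", ["price", "discount"], lambda q: "Here is our current offer."),
]

if __name__ == "__main__":
    for query in ["What dosage is safe?", "Any discount on the price?", "Hello there"]:
        chosen = route(query, GENERATORS, CASUAL)
        print(f"{query!r} -> {chosen.name}: {chosen.respond(query)}")
```

Queries with no cue hits fall through to the casual generator, mirroring the split between the deeper, goal-directed responders and the less nuanced casual speech.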

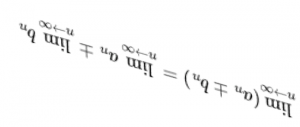

In social and cognitive psychology there is some recent research that bears a resemblance to this and is also related to contemporary politics and society. Cognitive modularity, at its simplest, is one area of similarity. Within that scope is the Type 1/Type 2 distinction, or “fast” versus “slow” thinking. In this “dual process” framework, decision-making may be guided by intuitive Type 1 thinking that relates to more primitive, evolutionarily older modules of the mind. Type 1 evolved to help solve survival dilemmas that require quick resolution. Inferential reasoning developed more slowly and apparently fairly late for us, with modern education strengthening the ability of these Type 2 decision processes to override the intuitive Type 1 decisions.

These insights have been applied in remarkably interesting ways in trying to understand political ideologies, moral choices, and even religious identity. For instance, there is some evidence that conservative political leanings correlate more with Type 1 processes.…