Author: Mark Davis

Against Superheroes: Section 18 (Chapter 15)

The sessions with Sakara were illuminative and intimate. She asked me about what I remembered from before the transformation. I sat in the chair across from her, and the susurration of the air conditioning, which seemed to feed the field projectors as much as our comfort, was a constant presence beneath our discussion. I remembered very little: hints of childhood, more about the dig at Mt. Hasan, bits of Ela’s sexual mystique, strange flashes of schools and lights. Interrogating this past revealed very little that was new or surprising to me. I was candid about my limitations concerning the changes to my memory. I was also candid that with the loss of a personal history came, inevitably, a loss of the essentials of being human. We are continuities of experience. I can’t describe who I am except as part of my memories and the feelings that surround and enervate them. The religious ideas Sakara raised, a protracted calamity running from ethical concerns about harming others to the status of the unborn, all unravel with this consideration. A baby is alive but only tentatively human in the strongest sense. A god knows this, can even feel it as a ribbon into the future, but humans just arbitrarily assign categories driven by misunderstandings of these cognitive postures.

Why were the gods so capricious, she asked me. Why were they so inhuman? They were good human questions, but the answer hardly rose above this faint echo of incapacity. If your mind is subsumed in this web of temporal flux, if you recognize the flammability of experience, and if there are other islands of experience too, then what is left is not the moving arc of intransitive expectations and plans that a human has, but the broader permanence of an ineffable now.…

The Retiring Mind, Part 1: Clouds

I’m setting my LinkedIn and Facebook status to retired on 11/30 (a month later than planned, alas). Retired isn’t completely accurate since I will be in the earliest stage of a new startup in cognitive computing, but I want to bask ever-so-briefly in the sense that I am retired, disconnected from the circuits of organizations, and able to do absolutely nothing from day to day if I so desire.

(I’ve spent some serious recent cycles trying to combine Samuel Barber’s “Adagio for Strings” as an intro to the Grateful Dead’s “Terrapin Station”…on my Line6 Variax. Modulate B-flat to C, then D, then E. If there is anything more engaging for a retiring mind, I can’t think of it.)

I recently pulled the original kitenga.com server off a shelf in my garage because I had a random Kindle Direct Publishing account whose credentials I couldn’t find and, in a new-millennium catch-22, I couldn’t ask for a password reset because it would go to that old email address. I swapped hard drives among a few Linux pizza-box servers, messed around with old BIOS and boot settings, and was finally able to get the full mail archive off the drive. In the process I had to rediscover all the arcane bits of Dovecot, mail.rc, and SMTP configuration, and a host of other complexities. Alas, I didn’t find what I needed there, so I compressed the mail collection and put it on Dropbox.
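
For anyone facing the same hunt, here is a minimal sketch of searching a recovered archive with Python's standard `mailbox` module. It assumes (this is not from the original setup) that the mail has been exported to a single mbox file; a Dovecot Maildir tree would use `mailbox.Maildir` instead. The function name `find_messages` and the search term are hypothetical.

```python
import mailbox
from email.header import decode_header, make_header


def find_messages(mbox_path: str, needle: str) -> list[str]:
    """Return the subjects of messages whose Subject or From header contains `needle`.

    Hypothetical helper: assumes the archive is a single mbox file.
    The match is case-insensitive.
    """
    needle = needle.lower()
    hits = []
    # create=False: fail loudly instead of silently creating an empty mailbox.
    box = mailbox.mbox(mbox_path, create=False)
    try:
        for msg in box:
            # Decode any RFC 2047 encoded words so non-ASCII headers compare cleanly.
            subject = str(make_header(decode_header(msg.get("Subject", ""))))
            sender = str(make_header(decode_header(msg.get("From", ""))))
            if needle in subject.lower() or needle in sender.lower():
                hits.append(subject)
    finally:
        box.close()
    return hits
```

Calling `find_messages("old-mail.mbox", "kindle")` on a hypothetical exported file would list the subjects of any messages that mention Kindle in the Subject or From header.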

I also retired a Mac mini, shipping it off to a buy-back place for a few hundred bucks in Amazon credit. It had been a Subversion server that served as the follow-up to kitenga.com, holding more than ten years of intellectual property in stasis.…

Free Will and Thermodynamic Warts

The Stone at the New York Times is a great resource for insights into both contemporary and rather ancient discussions in philosophy. Here’s William Irwin at King’s College discoursing on free will and moral decision-making. The central problem is one that we all discussed in high school: if our atomistic world is deterministic, in that there is a chain of causation from one event to another (contingent in the last post), then even our mental processes must be caused, and there is no free will in the expected sense (“libertarian free will” in the literature). The simplest fix is to propose a non-material soul that somehow interacts with the material being and is inherently non-deterministic. This results in a dualism of matter and mind that doesn’t seem justifiable by any empirical results. For instance, decision-making does appear to have a neuropsychological basis: we know about the effects of brain lesions and neurotransmitters, and even how smells can influence decisions. Irwin also claims that realizing we may lack free will leaves us awash in a sense of hopelessness at the simultaneous loss of the metaphysical reality of an objective moral system. Without free will, we seem off the hook for our decisions.

Compatibilists will disagree, and might even cite quantum indeterminacy as a rescue donut for pulling some notion of free will up out of the deep ocean of Irwin’s despair. But the fix is perhaps even easier than that. Even though we might recognize chains of causation at a microscopic scale, the macroscopic combinations of these events, even without quantum indeterminacy, become predictable only along broad contours of probabilistic outcomes.…
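
The post does not name a model, so here is an illustration of my own choosing (an assumption, not the author's example): the logistic map x → 4x(1 − x), a fully deterministic rule. Two starting points a trillionth apart soon follow unrelated paths, yet a large ensemble of starting points settles into a stable distribution that matches the map's known invariant (arcsine) density. Individual outcomes become unpredictable while the probabilistic contours stay predictable. A sketch:

```python
import math


def logistic_step(x: float, r: float = 4.0) -> float:
    """One step of the logistic map: a fully deterministic rule."""
    return r * x * (1.0 - x)


def trajectory(x0: float, steps: int) -> list[float]:
    """The deterministic chain of states starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(logistic_step(xs[-1]))
    return xs


def ensemble_histogram(offset: float, n: int = 20_000, steps: int = 50,
                       bins: int = 10) -> list[float]:
    """Evolve n evenly spaced starting points (shifted by offset) and
    return the fraction that ends up in each equal-width bin on [0, 1]."""
    counts = [0] * bins
    for i in range(n):
        x = (i + 0.5) / n + offset
        for _ in range(steps):
            x = logistic_step(x)
        counts[min(int(x * bins), bins - 1)] += 1
    return [c / n for c in counts]


def arcsine_mass(lo: float, hi: float) -> float:
    """Probability the map's invariant density 1/(pi*sqrt(x(1-x))) assigns to [lo, hi]."""
    def cdf(x: float) -> float:
        return (2.0 / math.pi) * math.asin(math.sqrt(x))
    return cdf(hi) - cdf(lo)
```

Comparing `trajectory(0.2, 60)` with `trajectory(0.2 + 1e-12, 60)` shows the two paths agreeing for the first handful of steps and then diverging completely, while `ensemble_histogram(0.0)` and `ensemble_histogram(1e-9)` come out nearly identical, with the end bins close to `arcsine_mass(0.0, 0.1)`, roughly 0.2.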

Rationality and the Intelligibility of Philosophy

There is a pervasive meme in the physics community that holds as follows: there are many physical phenomena that don’t correspond in any easy way to our ordinary experiences of life on earth. We have wave-particle duality wherein things behave like waves sometimes and particles other times. We have simultaneous entanglement of physically distant things. We have quantum indeterminacy and the emergence of stuff out of nothing. The tiny world looks like some kind of strange hologram with bits connected together by virtual strings. We have a universe that began out of nothing and that begat time itself. It is, in this framework, worthwhile to recognize that our everyday experiences are not necessarily useful (and are often confounding) when trying to understand the deep new worlds of quantum and relativistic physics.

And so it is worthwhile to ask whether many of the “rational” queries that have been made down through time have any intelligible meaning given our modern understanding of the cosmos. For instance, if we state the premise “all things are either contingent or necessary” that underlies a poor form of the Kalam Cosmological Argument, we can immediately question the premise itself, and a false premise leaves the syllogism unsound. Maybe the entanglement of different things is piece-part of the entanglement of large-scale spacetime, and the insights we have so far are merely shadows of the real processes acting behind the scenes? Who knows what happened before the Big Bang?
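
The distinction at work here is between a valid argument and a sound one. As a small illustration (modus ponens, chosen for simplicity rather than the cosmological argument itself), a truth table shows that the form is valid, yet once a premise is false the conclusion can come out either true or false, so the argument no longer establishes it:

```python
from itertools import product


def implies(p: bool, q: bool) -> bool:
    """Material conditional: false only when p is true and q is false."""
    return (not p) or q


# Each row: truth values of P and Q, the two premises ("P -> Q" and "P"),
# and the conclusion Q of modus ponens.
rows = [(p, q, implies(p, q), p, q) for p, q in product([True, False], repeat=2)]

# Valid: in every row where both premises are true, the conclusion is true.
valid = all(conclusion for _, _, prem1, prem2, conclusion in rows if prem1 and prem2)

# With at least one false premise, the conclusion takes both truth values,
# so the argument gives it no support either way.
outcomes_with_false_premise = {
    conclusion for _, _, prem1, prem2, conclusion in rows if not (prem1 and prem2)
}
```

Here `valid` is `True`, while `outcomes_with_false_premise` contains both `True` and `False`: validity alone guarantees nothing once a premise fails.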

In other words, do the manipulations of logic and the assumptions built into the terms lead us to empty and destructive conclusions? There is no reason not to suspect that, and therefore the bits of rationality that don’t derive from empirical results are immediately suspect.…

Neutered Inventiveness

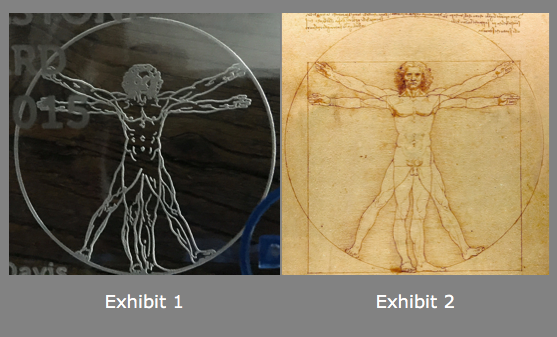

I just received an award from my employer for getting more than five patents through the patent committee this year. Since I’m a member of the committee, it was easy enough. Just kidding: I was not, of course, allowed to vote on my own patents. The award I received leaves a bit to be desired, however. First, I have to say that it is a well-crafted glass block about 4″ x 3″ and has the kind of heft to it that would make it invaluable as a weapon in a game of Clue. That being said, I give you Exhibits 1 and 2:

Exhibit 1 is a cell-phone snap, taken through the glass surface of my award, of Leonardo da Vinci’s famous Vitruvian Man, so named because it was a tribute to the architect Vitruvius, or so Wikipedia tells me. Exhibit 2 is an image of the original sketch by da Vinci, also borrowed from Wikipedia.

And now, with only minimal scrutiny, my dear reader can see the fundamental problem in the borrowing and translation of old Vitruvius. While Vitruvius was deeply enamored of a sense of symmetry to the human body, and da Vinci took that sense of wonder as a basis for drawing his figure, we can rightly believe that the presence of all anatomical parts of the man was regarded as essential for the accurate portrayal of man’s elaborate architecture.

My inventions now seem somehow neutered and my sense of wonder castrated by this lesser man, no matter what the intent of the good people in charge of the production of the award. I reflect on their motivations in light of recent arguments concerning the proper role of the humanities in our modern lives.…

Magic in the Age of Unicorns

Ah, Sili Valley, my favorite place in the world but also a place (or maybe a state of mind) that has the odd quality of being increasingly revered for abstractions that bear only cursory similarities to reality. Isn’t that always the way of things? Here’s The Guardian analyzing startup culture. The picture in the article is especially amusing to me since my first startup (freshly spun out of Xerox PARC) was housed on Jay Street just across 101 from Intel’s Santa Clara campus (just to the right in the picture). In the evening, as traffic jammed up on the freeway, I watched a hawk hunt in the cloverleaf interchange of the Great American Parkway/101 intersection. It was both picturesque and unrelenting in its cruelty. And then, many years later, I would pitch in the executive center of the tall building alongside Revolution Analytics (now gone to Microsoft).

Everything changes so fast, then changes again. If it is a bubble, it is a more beautiful bubble than before, where it isn’t enough to just stand up a website, but there must be unusual change and disruption. Even the unicorns must pop those bubbles.

I will note that I am returning to the startup world in a few weeks. Startup next will, I promise, change everything!…

The IQ of Machines

Perhaps idiosyncratic to some is my focus in the previous post on the theoretical background to machine learning that derives predominantly from algorithmic information theory and, in particular, Solomonoff’s theory of induction. I do note that there are other theories that can be brought to bear, including Vapnik’s Structural Risk Minimization and Valiant’s PAC-learning theory. Moreover, perceptrons and vector quantization methods and so forth derive from completely separate principles that can then be cast into more fundamental problems in information geometry and physics.
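
Solomonoff’s theory weights each hypothesis by roughly 2^−K, where K is its Kolmogorov complexity. K is uncomputable, so a common, crude stand-in (an assumption here, not the method from the previous post) is the length of a compressed encoding. A sketch using Python’s `zlib`, with hypothetical helper names:

```python
import os
import zlib


def compressed_bits(data: bytes) -> int:
    """Bits in the zlib-compressed form of data: a crude, computable proxy
    for Kolmogorov complexity K, which itself cannot be computed."""
    return 8 * len(zlib.compress(data, 9))


def relative_log2_prior(a: bytes, b: bytes) -> int:
    """log2 of 2^-K(a) / 2^-K(b), i.e. K(b) - K(a), under the compression proxy.
    Positive means a is judged simpler, hence a priori more probable, than b."""
    return compressed_bits(b) - compressed_bits(a)


patterned = b"0123456789" * 100   # 1,000 bytes with an obvious regularity
noisy = os.urandom(1000)          # 1,000 bytes with (almost surely) none
```

A regular string earns a far larger prior weight than random bytes of the same length, which is the compression intuition behind treating simpler explanations as more probable.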

Artificial General Intelligence (AGI) is then perhaps the hard problem on the horizon, one on which I don’t see significant progress in the past twenty years or so. That is not to say that I am not an enthusiastic student of the topic and field, just that I don’t see risk levels from intelligent AIs rising to what we should consider a real threat. This topic of how to grade threats deserves deeper treatment, of course, and is at the heart of everything from so-called “nanny state” interventions in food and product safety to how to construct policy around global warming. Luckily, and unlike both those topics, killer AIs don’t threaten us at all quite yet.

But what about simply characterizing what AGIs might look like and how we can even tell when they arise? Mildly interesting is Shane Legg and Joel Veness’s idea of an Algorithmic Intelligence Quotient, or AIQ, which they expand on in An Approximation of the Universal Intelligence Measure. This measure is derived from, voilà, exactly the kind of algorithmic information theory (AIT) and compression arguments that I led with in the slide deck. Is this the only theory around for AGI? Pretty much, but different perspectives tend to lead to slightly different focuses.…
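
For reference, the measure that AIQ approximates is Legg and Hutter’s universal intelligence, which scores an agent π by its expected total reward V in each computable environment μ drawn from the set E, weighting each environment by its simplicity through its Kolmogorov complexity K(μ):

```latex
\Upsilon(\pi) = \sum_{\mu \in E} 2^{-K(\mu)} \, V^{\pi}_{\mu}
```

Because K is uncomputable and E is infinite, AIQ estimates this quantity by sampling environment programs from a reference machine and averaging the agent’s measured rewards; the paper gives the details of its particular reference machine.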

Machine Learning and the Coming Robot Apocalypse

Slides from a talk I gave today on current advances in machine learning are available in PDF, below. The agenda is pretty straightforward: starting with some theory about overfitting based on algorithmic information theory, we proceed on through a taxonomy of ML types (not exhaustive), then dip into ensemble learning and deep learning approaches. An analysis of the difficulty and types of performance we get from various algorithms and problems is presented. We end with a discussion of whether we should be frightened about the progress we see around us.
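
One classic piece of reasoning behind ensemble learning can be checked with a little arithmetic. If each of n classifiers is right with probability p > 0.5 and, crucially, their errors are independent (an idealizing assumption; real ensemble members are correlated, which is why bagging and random forests work to decorrelate them), then a majority vote is right more often than any single member. A sketch with a hypothetical helper:

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent voters, each
    correct with probability p, gives the right answer. n must be odd."""
    if n % 2 == 0:
        raise ValueError("use an odd number of voters to avoid ties")
    need = n // 2 + 1  # smallest strict majority
    # Sum the binomial probabilities of having at least `need` correct voters.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))
```

With p = 0.6, a single voter is right 60% of the time and three voters about 64.8% of the time, and accuracy keeps climbing as n grows; with p below 0.5, the same formula drives the majority toward being wrong.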

Against Superheroes, Section 15

Back among the Western minds, first in Iceland and then his home country of America, living under a cloak of pretense, Sinister appears more human than ever since his transformation. We note that he has taken on a rather different engagement with his powers that is more nuanced and self-reflective. The gradual transformation from an undiluted, power-mad self into this new form operates at several different levels. First there are the physical transformations that ultimately recompose him into his human form. Then there are the mental acrobatics that allow a new sense of self to emerge out of the rubble of the tempestuous being that drove events at Oasis.

We take a moment to try to correlate his rather detailed descriptions of the nature of his powers with known current technologies. He describes the physical world as collections of particles that have deterministic trajectories, for example, and describes his engagement with those particles as altering that determinism to change material things as well as the “neural” structure of humans themselves. Other Collectives have weighed in on how precisely this technology might work, but the general conclusion is that it closely resembles the bio-essence fields of levels 2 and 3, as well as their control. His misunderstanding of how the technology works is quite understandable; he lacked the educational context that we have.

It is important to consider his statements within the broad contours of his primitive worldview. He views the world as fundamentally composed of small components of material things (atoms and molecules), and he also conceives of mental function as derivative of the motions of these things. We know this to be a primitive and fundamentally bizarre way of comprehending reality.…