In *The Origins of Political Order*, Fukuyama points out that Chinese feudalism was quite unlike European feudalism. In Europe, vassals were often unrelated to their lords, and the relationship between them was consensual and renewed annually. Only later did patriarchal lineages become important in preserving the line of descent among the lords. That was not the case in China, where extensive networks of blood relations dominated the lord-vassal relationship. Chinese feudalism resembled tribal and clan organization more than it resembled the European model, with Confucianism layered on top.

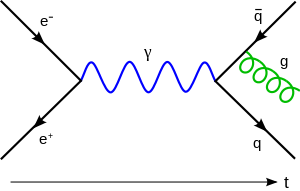

So when E.O. Wilson, still intellectually agile in his twilight years, describes the divide between kin selection and multi-level selection in the New York Times, we start to see the same two patterns of explanation at a far more basic level than the happenstance of Chinese versus European culture. Kin selection predicts that genetic co-representation can lead an individual to sacrifice itself in an evolutionary sense. The sacrifice ranges from forgoing reproduction, as in Hymenoptera like bees and ants, to behaviors like standing watch against predators and thereby becoming a target. This is the traditional explanation, and it fits the Chinese model well. The multi-level selection model, by contrast, posits that selection also operates at the level of the group. Kin selection offers no good explanation for the European feudal tradition unless the vassals were inbred with their lords, which seems unlikely in such a large, diverse cohort. The consolidation of power among the lords and the practice of intermarriage may later have resulted in inbreeding depression, but the overall model rested on social ties rather than on genetic relatedness.…