In the New York Times Stone column, James Blachowicz of Loyola challenges the assumption that the scientific method is uniquely distinguishable from other ways of thinking and problem solving we regularly employ. In his example, he lays out how writing poetry involves an alignment of words that conforms to the requirements of the poem. Whether actively aware of the process or not, the poet is solving constraint satisfaction problems concerning formal requirements like meter and structure, linguistic problems like parts of speech and grammar, semantic problems concerning meaning, and pragmatic problems like referential extension and symbolism. Scientists do the same kinds of things in fitting a theory to data. And, in Blachowicz’s analysis, there is no special distinction between scientific method and other creative methods like the composition of poetry.


We can easily see how this extends to musical composition, where there are even more constraints, ranging from the formal through to, possibly, the neuropsychology of sound. I say “possibly” because there remains uncertainty about how much nurture versus nature is involved in the brain’s reaction to sounds and music.

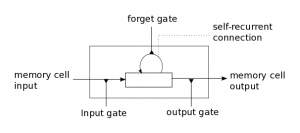

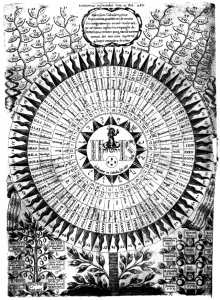

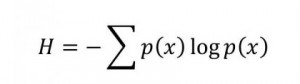

In terms of a computational model of this creative process, if we presume that there is an objective function that governs possible fits to the given problem constraints, then we can clearly optimize towards a maximum fit. For many of the constraints there are, however, discrete parameterizations (which part of speech? which word?) that are not like curve fitting to scientific data. In fairness, discrete parameters occur there, too, especially in meta-analyses of broad theoretical possibilities (Loop quantum gravity vs. string theory? What will we tell the children?). The discrete parameterizations blow up the search space with their combinatorics, demonstrating on the one hand why we are so damned amazing, and on the other hand why a controlled randomization method like evolutionary epistemology’s blind search and selective retention gives us potential traction in the face of this curse of dimensionality. …
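To make the idea concrete, here is a minimal Python sketch of blind variation and selective retention applied to a toy line of verse. Everything in it is an assumption for illustration rather than anything from Blachowicz's column: the tiny lexicon, the part-of-speech template, the syllable target, and the names `fitness` and `search` are all hypothetical. The objective function simply counts constraint violations, and the search mutates one word at random and keeps the result whenever it is no worse.

```python
import random

# Hypothetical toy lexicon: word -> (part of speech, syllable count).
LEXICON = {
    "the": ("det", 1),
    "a": ("det", 1),
    "river": ("noun", 2),
    "moon": ("noun", 1),
    "shadow": ("noun", 2),
    "sings": ("verb", 1),
    "wanders": ("verb", 2),
    "softly": ("adv", 2),
    "now": ("adv", 1),
}
WORDS = sorted(LEXICON)

# Assumed constraints for a single line: a part-of-speech template
# (a stand-in for grammar) and a syllable target (a stand-in for meter).
TEMPLATE = ["det", "noun", "verb", "adv"]
TARGET_SYLLABLES = 6


def fitness(line):
    """Objective function: 0 means every constraint is met, lower is worse."""
    pos_errors = sum(LEXICON[w][0] != pos for w, pos in zip(line, TEMPLATE))
    syllables = sum(LEXICON[w][1] for w in line)
    return -(pos_errors + abs(syllables - TARGET_SYLLABLES))


def search(generations=2000, seed=0):
    """Blind variation and selective retention over the discrete word choices."""
    rng = random.Random(seed)
    best = [rng.choice(WORDS) for _ in TEMPLATE]  # random starting line
    for _ in range(generations):
        candidate = list(best)
        # Blind variation: swap one randomly chosen slot for a random word.
        candidate[rng.randrange(len(candidate))] = rng.choice(WORDS)
        # Selective retention: keep the variant only if it is no worse.
        if fitness(candidate) >= fitness(best):
            best = candidate
    return best, fitness(best)


if __name__ == "__main__":
    line, score = search()
    print(" ".join(line), score)
```

Even this toy has 9^4 = 6,561 candidate lines (nine words in four slots), and each added slot multiplies that by nine, which is the combinatorial blow-up in miniature. A real poem would also need semantic and pragmatic constraints, which this sketch makes no attempt to model.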

Research can flow into interesting little eddies that cohere into larger circulations that become transformative phase shifts. That happened to me today, between a morning drive in the Northern California hills and departing for lunch at one of our favorite restaurants in Danville.
